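```python
import os
import pickle
import time

import scrapy
from scrapy import signals
from scrapy.linkextractors import LinkExtractor
from scrapy.spiders import CrawlSpider, Rule
from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By


class LagouSpider(CrawlSpider):
    name = 'lagou_test'
    allowed_domains = ['www.lagou.com']
    start_urls = ['https://www.lagou.com']

    # Login cookies are cached here, one pickle file per cookie
    cookie_dir = 'H:/scrapy/ArticleSpider/cookies/lagou'

    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/60.0.3112.113 Safari/537.36',
    }

    rules = (
        # Follow job-listing pages and pass each one to parse_job
        Rule(LinkExtractor(allow=("www.lagou.com/zhaopin/",)), follow=True, callback='parse_job'),
    )

    def __init__(self, *args, **kwargs):
        # Selenium 4 takes the chromedriver path through a Service object
        self.browser = webdriver.Chrome(service=Service(executable_path="E:/tmp/chromedriver.exe"))
        super().__init__(*args, **kwargs)

    @classmethod
    def from_crawler(cls, crawler, *args, **kwargs):
        # scrapy.xlib.pydispatch is gone from current Scrapy; connect the signal through the crawler
        spider = super().from_crawler(crawler, *args, **kwargs)
        crawler.signals.connect(spider.spider_closed, signal=signals.spider_closed)
        return spider

    def spider_closed(self, spider):
        # Quit Chrome when the spider exits
        self.logger.info("spider closed")
        self.browser.quit()

    def get_cookie_from_cache(self):
        # Rebuild a {name: value} dict from the cookies pickled by an earlier login
        cookie_dict = {}
        for parent, dirnames, filenames in os.walk(self.cookie_dir):
            for filename in filenames:
                if filename.endswith('.lagou'):
                    self.logger.debug('loading cached cookie %s', filename)
                    # Join with `parent` so files in subdirectories are opened from the right place
                    with open(os.path.join(parent, filename), 'rb') as f:
                        d = pickle.load(f)
                    cookie_dict[d['name']] = d['value']
        return cookie_dict
```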
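The rule's `allow` value is a regular expression. LinkExtractor searches for it anywhere in each absolute URL, so `"www.lagou.com/zhaopin/"` follows any link that contains that text. Because the dots are unescaped, each one matches any character. The stdlib sketch below reproduces that matching with `re.search` so it can be tried without Scrapy. The sample URLs are made up for illustration.

```python
import re

# The pattern from the Rule, and an escaped variant in which '.' only matches a literal dot
LOOSE = re.compile("www.lagou.com/zhaopin/")
STRICT = re.compile(r"www\.lagou\.com/zhaopin/")


def is_followed(url, pattern):
    """True if the pattern occurs anywhere in the absolute URL (re.search, not a full match)."""
    return pattern.search(url) is not None


for url in [
    "https://www.lagou.com/zhaopin/Python/",   # job-listing path: both patterns match
    "https://www.lagou.com/jobs/list_python",  # different path: neither matches
    "https://wwwXlagou.com/zhaopin/",          # only the loose pattern matches
]:
    print(url, is_followed(url, LOOSE), is_followed(url, STRICT))
```

In the spider itself, `allowed_domains` would already filter out a host like the last one, so the unescaped pattern is mostly harmless. The escaped form simply states the intent more precisely.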

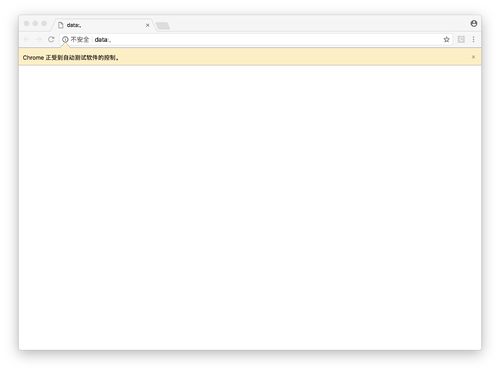
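`get_cookie_from_cache` returns every cached cookie regardless of age, so an expired login could be sent again. The sketch below shows one way to skip stale entries. It assumes each pickled dict carries the optional `expiry` field (seconds since the epoch) that Selenium's `get_cookies()` includes for persistent cookies. Session cookies have no `expiry` and are kept. The function name `load_fresh_cookies` and its `now` parameter are hypothetical.

```python
import os
import pickle
import tempfile
import time


def load_fresh_cookies(cookie_dir, now=None):
    """Hypothetical variant of get_cookie_from_cache that drops expired cookies.

    Each '*.lagou' file is assumed to hold one pickled Selenium cookie dict.
    """
    now = time.time() if now is None else now
    cookie_dict = {}
    for parent, _dirnames, filenames in os.walk(cookie_dir):
        for filename in filenames:
            if not filename.endswith('.lagou'):
                continue
            with open(os.path.join(parent, filename), 'rb') as f:
                cookie = pickle.load(f)
            expiry = cookie.get('expiry')
            if expiry is not None and expiry <= now:
                continue  # stale cookie: leave it out so the spider logs in again
            cookie_dict[cookie['name']] = cookie['value']
    return cookie_dict


# Usage with a throwaway directory and made-up cookies
with tempfile.TemporaryDirectory() as tmp:
    samples = [
        {'name': 'JSESSIONID', 'value': 'abc'},                   # session cookie: kept
        {'name': 'login', 'value': 'true', 'expiry': 2000000000},  # still valid at now=1e9: kept
        {'name': 'old', 'value': 'x', 'expiry': 100},              # long expired: dropped
    ]
    for c in samples:
        with open(os.path.join(tmp, c['name'] + '.lagou'), 'wb') as f:
            pickle.dump(c, f)
    print(load_fresh_cookies(tmp, now=1_000_000_000))
```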

```python
    def start_requests(self):
        # Reuse cookies cached by an earlier run; log in through Chrome only if there are none
        cookie_dict = self.get_cookie_from_cache()
        if not cookie_dict:
            self.browser.get("https://passport.lagou.com/login/login.html")
            # Account name and password fields (the values are left blank here)
            self.browser.find_element(
                By.CSS_SELECTOR, "div:nth-child(2) > form > div:nth-child(1) > input").send_keys("")
            self.browser.find_element(
                By.CSS_SELECTOR, "div:nth-child(2) > form > div:nth-child(2) > input").send_keys("")
            self.browser.find_element(
                By.CSS_SELECTOR, "div:nth-child(2) > form > div.input_item.btn_group.clearfix > input").click()
            # Give the login time to complete before reading the cookies
            time.sleep(10)

            cookies = self.browser.get_cookies()
            self.logger.info(cookies)
            os.makedirs(self.cookie_dir, exist_ok=True)
            for cookie in cookies:
                # Write each cookie to its own file so the next run can load it from the cache
                with open(os.path.join(self.cookie_dir, cookie['name'] + '.lagou'), 'wb') as f:
                    pickle.dump(cookie, f)
                cookie_dict[cookie['name']] = cookie['value']

        # Earlier experiment with a hand-copied Cookie header and requests, kept for reference:
        # jsonCookies = json.dumps(cookies)
        # cookie = json.loads(jsonCookies)
        # self.cookie = cookie
        # import requests
        # response = requests
        # headers = {
        #     'Accept':'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,*/*;q=0.8',
        #     'Accept-Encoding':'gzip, deflate, sdch, br',
        #     'Accept-Language': 'zh-CN, zh;q = 0.8',
        #     'Cache-Control': 'max-age = 0',
        #     'Connection': 'keep-alive',
        #     'Cookie':'user_trace_token=20170421093424-4de1545e1533450998cfd49656ffa6e8; LGUID=20170421093426-a79b63ec-2632-11e7-8615-525400f775ce; __guid=237742470.2521632498681821700.1508290616954.1816; JSESSIONID=ABAAABAAADEAAFI58C0C15D474804D8985D47B510C54153; _gat=1; PRE_UTM=; PRE_HOST=; PRE_SITE=; PRE_LAND=https%3A%2F%2Fwww.lagou.com%2F; X_HTTP_TOKEN=0b9e8cdd785bac4b932ffbbaecf53a20; _putrc=151A76E83B6D3EDF; login=true; unick=%E6%9C%AC%E5%90%8D; showExpriedIndex=1; showExpriedCompanyHome=1; showExpriedMyPublish=1; hasDeliver=2; index_location_city=%E4%B8%8A%E6%B5%B7; monitor_count=3; _ga=GA1.2.175533001.1492738464; Hm_lvt_4233e74dff0ae5bd0a3d81c6ccf756e6=1508290599,1508900376; Hm_lpvt_4233e74dff0ae5bd0a3d81c6ccf756e6=1508900402; LGSID=20171025105935-88397720-b930-11e7-9613-5254005c3644; LGRID=20171025110000-973a85ae-b930-11e7-a797-525400f775ce',
        #     'Host': 'www.lagou.com',
        #     'Upgrade-Insecure-Requests': 1,
        #     'User-Agent': 'Mozilla/5.0(Windows NT 6.1; WOW64) AppleWebKit/537.36(KHTML, like Gecko) Chrome/55.0.2883.87 Safari/537.36'
        # }
        # r = requests.get(self.start_urls[0], headers=headers, cookies=cookie_dict)

        # No explicit callback: the response goes through CrawlSpider's own parsing, which applies `rules`
        return [scrapy.Request(url=self.start_urls[0], cookies=cookie_dict, headers=self.headers)]

    def parse_job(self, response):
        # Parse Lagou job postings
        self.logger.info('------------- message separator -------------')
        response_text = response.text
        return
        # return scrapy.Request(url=self.start_urls[0], cookies=self.start_urls, callback=self.after_login)
```

This is the code from a student who previously wanted to log in to Lagou with Selenium, grab the cookies, and hand them to Scrapy for crawling. Take a look at the source; I have tested it and it worked.
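The spider flattens Selenium's cookies to `{name: value}`, which discards the `domain` and `path` each cookie was issued for. `scrapy.Request` also accepts `cookies` as a list of dicts with `name`, `value`, `domain` and `path` keys. The sketch below converts the output of `browser.get_cookies()` into that list form. The helper name and sample values are hypothetical. It is plain Python, so it runs without Scrapy or Selenium installed.

```python
def selenium_to_scrapy_cookies(selenium_cookies):
    """Hypothetical helper: keep name/value, plus domain and path when Selenium provides them."""
    converted = []
    for cookie in selenium_cookies:
        item = {'name': cookie['name'], 'value': cookie['value']}
        for key in ('domain', 'path'):
            if cookie.get(key):
                item[key] = cookie[key]
        converted.append(item)  # extra Selenium keys (secure, httpOnly, ...) are dropped
    return converted


# Shape of two entries as returned by browser.get_cookies() (values made up)
sample = [
    {'name': 'login', 'value': 'true', 'domain': '.lagou.com', 'path': '/',
     'secure': False, 'httpOnly': False},
    {'name': 'JSESSIONID', 'value': 'ABC123', 'domain': 'www.lagou.com', 'path': '/',
     'secure': False, 'httpOnly': True},
]
print(selenium_to_scrapy_cookies(sample))
# In the spider this would become: scrapy.Request(url, cookies=selenium_to_scrapy_cookies(cookies))
```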
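The commented-out experiment in `start_requests` pastes a whole `Cookie` request header copied from the browser. To reuse such a header with Scrapy, the string has to be split into the `{name: value}` dict that `scrapy.Request(cookies=...)` accepts. The sketch below assumes the usual `name=value; name=value` layout. The function name is hypothetical and the sample header is made up. Values are kept exactly as sent, with percent-encoding intact.

```python
def cookie_header_to_dict(header):
    """Hypothetical helper: split a 'name=value; name=value' Cookie header into a dict."""
    cookies = {}
    for pair in header.split(';'):
        name, sep, value = pair.strip().partition('=')
        if sep and name:  # skip empty fragments and pieces without '='
            cookies[name] = value  # partition keeps any later '=' inside the value
    return cookies


header = 'user_trace_token=abc; PRE_UTM=; login=true; index_location_city=%E4%B8%8A%E6%B5%B7'
print(cookie_header_to_dict(header))
# → {'user_trace_token': 'abc', 'PRE_UTM': '', 'login': 'true', 'index_location_city': '%E4%B8%8A%E6%B5%B7'}
```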
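The fixed `time.sleep(10)` in `start_requests` either wastes time or is too short when the login is slow. Selenium provides `WebDriverWait(driver, timeout).until(condition)` for this. The stdlib sketch below shows the same poll-until-true idea so it runs without a browser. `wait_until` and its parameters are hypothetical. The `login` cookie check in the comment is also an assumption, based only on `login=true` appearing in the copied header above.

```python
import time


def wait_until(condition, timeout=10.0, interval=0.5):
    """Call condition() until it returns a truthy value or the timeout expires.

    Returns that truthy value, or raises TimeoutError. A stand-alone sketch of the
    idea behind Selenium's WebDriverWait.until, not a replacement for it.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} s")
        time.sleep(interval)


# Hypothetical use in start_requests instead of time.sleep(10):
# wait_until(lambda: any(c['name'] == 'login' and c['value'] == 'true'
#                        for c in self.browser.get_cookies()), timeout=30)

# Self-contained demo: the condition becomes true on the third check
calls = {'n': 0}


def third_time_lucky():
    calls['n'] += 1
    return calls['n'] >= 3


print(wait_until(third_time_lucky, timeout=5, interval=0.01))  # → True
```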