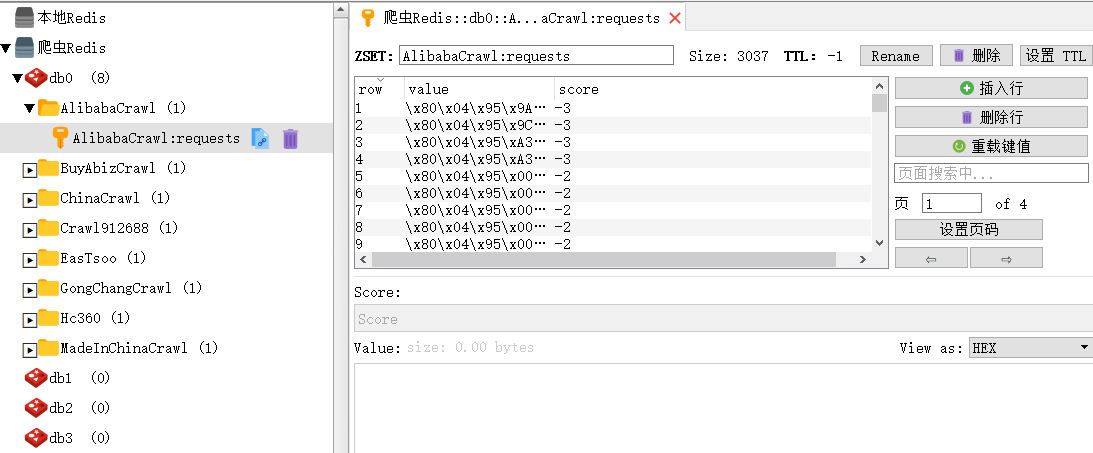

Why is database insertion so slow, and why is so little data stored, when I use the CrawlSpider template for a distributed crawl?

[Spider file]

# -*- coding: utf-8 -*-
import scrapy
from scrapy.linkextractors import LinkExtractor
from scrapy.spiders import Rule, CrawlSpider
from scrapy_redis.spiders import RedisCrawlSpider

from items import BuyinfoItem, SellinfoItem, CompanyinfoItem
from utils.common import (
    suiji_str, html_geshi, zhsha256, img_random, date_Handle, address_Handle,
    qq_Handle, url_qian, Imgdloss, add_requests, list_extract, price_Handle,
)

class EastsooSpider(RedisCrawlSpider):
    name = 'EasTsoo'
    allowed_domains = ['www.eastsoo.com']
    redis_key = '{0}:start_urls'.format(name)

    rules = (
        # Purchase requests
        Rule(LinkExtractor(allow=r"buy/[\w|-]+\.html$"), callback='buy_html', follow=True),
        # Supply listings
        Rule(LinkExtractor(allow=r"buyoffer/\w+\.html$"), callback='sell_html', follow=True),
        # Companies
        Rule(LinkExtractor(allow=r"www\.eastsoo\.com/u\d+($|/$)"), callback='com_html', follow=True),
    )

    def sell_html(self, response):
        # Supply listing
        tong = response.xpath("//div[@class='buy_top line']")
        if tong:
            es_id = suiji_str()
            title = response.xpath("//head/title/text()").extract_first("")
            tags = response.xpath("//meta[@name='keywords']/@content").extract_first("")
            content = response.xpath("//meta[@name='description']/@content").extract_first("")
            htmltext = html_geshi(response.xpath("//body").extract_first(""))
            content += htmltext
            img = img_random()
            if img:
                img_url = Imgdloss(response.xpath("//div[@class='x4 buy_top_pic']//img/@src").extract_first("")).xiazai()
            else:
                img_url = ""
            company = response.xpath("//div[@class='buy_company']/div/a/text()").extract_first("").strip()
            city = response.xpath("//ul[@class='buy_top_message']//font[contains(text(),'所在地区:')]/following-sibling::text()").extract_first("").replace(" ", "·")
            xurl = response.xpath("//a[@class='button radius-small bg-main']/@href").extract_first("")
            if xurl:
                xphtml = add_requests("http://www.eastsoo.com", xurl)
                tele = list_extract(xphtml.xpath("//td[contains(text(),'机:')]/following-sibling::td/text()"))
            else:
                tele = ""
            price = price_Handle(response.xpath("//font[contains(text(),'当前价格:')]/following-sibling::span/text()").extract_first(""))

            # Build and yield the item
            SellInfo = SellinfoItem()
            SellInfo['es_id'] = es_id
            SellInfo['title'] = title
            SellInfo['tags'] = tags
            SellInfo['content'] = content
            SellInfo['url'] = response.url
            SellInfo['url_id'] = zhsha256(response.url)
            SellInfo['img_url'] = img_url
            SellInfo['company'] = company
            SellInfo['city'] = city
            SellInfo['tele'] = tele
            SellInfo['price'] = price
            yield SellInfo

    def buy_html(self, response):
        # Purchase request
        tong = response.xpath("//dl[@class='buyoffer_content']")
        if tong:
            es_id = suiji_str()
            title = response.xpath("//head/title/text()").extract_first("")
            tags = response.xpath("//meta[@name='keywords']/@content").extract_first("").strip()
            content = response.xpath("//meta[@name='description']/@content").extract_first("")
            htmltext = html_geshi(response.xpath("//body").extract_first(""))
            content += htmltext
            url = response.url
            url_id = zhsha256(url)
            img_url = ""
            company = response.xpath("//dl[@class='buyoffer_content']//li[contains(text(),'联系人:')]/text()").extract_first("").replace("联系人:", "")
            fabu_date = date_Handle(response.xpath("//dl[@class='buyoffer_content']//span[contains(text(),'发布时间:')]/following-sibling::text()").extract_first(""))

            # Build and yield the item
            BuyInfo = BuyinfoItem()
            BuyInfo['es_id'] = es_id
            BuyInfo['title'] = title
            BuyInfo['tags'] = tags
            BuyInfo['content'] = content
            BuyInfo['url'] = url
            BuyInfo['url_id'] = url_id
            BuyInfo['img_url'] = img_url
            BuyInfo['company'] = company
            BuyInfo['fabu_date'] = fabu_date
            yield BuyInfo

    def com_html(self, response):
        # Company
        tong = response.xpath("//div[@class='width margin-top-big shop_index_top']")
        if tong:
            es_id = suiji_str()
            title = response.xpath("//head/title/text()").extract_first("")
            tags = response.xpath("//meta[@name='keywords']/@content").extract_first("").strip()
            content = ""
            htmltext = html_geshi(response.xpath("//body").extract_first(""))
            content += htmltext
            url = response.url
            url_id = zhsha256(url)
            img_url = Imgdloss(response.xpath("//dl[@class='shop_company_content']//img/@src").extract_first("")).xiazai()
            company = response.xpath("//div[@class='shop_left_company_name']/text()").extract_first("")
            xurl = response.xpath("//div[@id='top_menu']//a[contains(text(),'联系方式')]/@href").extract_first("")
            xphtml = add_requests(xurl, '')
            tongs = xphtml.xpath("//table[@class='table']")
            # Defaults, so the item can still be built when the contact table is missing
            contacts = tele = mobile = address = qq = ""
            if tongs:
                contacts = list_extract(xphtml.xpath("//table[@class='table']//td[contains(text(),'联 系:')]/following-sibling::td/text()")).replace("先生", "").replace("女士", "").strip()
                tele = list_extract(xphtml.xpath("//table[@class='table']//td[contains(text(),'电 话:')]/following-sibling::td/text()")).strip().replace("*", "")
                mobile = list_extract(xphtml.xpath("//table[@class='table']//td[contains(text(),'手 机:')]/following-sibling::td/text()")).strip().replace("*", "")
                address = list_extract(xphtml.xpath("//table[@class='table']//td[contains(text(),'地 址:')]/following-sibling::td/text()")).strip().replace("*", "")
                qq = list_extract(xphtml.xpath("//table[@class='table']//td[contains(text(),'Q Q:')]/following-sibling::td/text()")).strip().replace("*", "")
            fax = ""  # //td[contains(text(),'传真:')]/following-sibling::td/img/@src
            wangwang = ""

            ComInfo = CompanyinfoItem()
            ComInfo['es_id'] = es_id
            ComInfo['title'] = title
            ComInfo['tags'] = tags
            ComInfo['content'] = content
            ComInfo['url'] = url
            ComInfo['url_id'] = url_id
            ComInfo['img_url'] = img_url
            ComInfo['company'] = company
            ComInfo['contacts'] = contacts
            ComInfo['tele'] = tele
            ComInfo['mobile'] = mobile
            ComInfo['fax'] = fax
            ComInfo['address'] = address
            ComInfo['qq'] = qq
            ComInfo['wangwang'] = wangwang
            yield ComInfo
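One thing that may explain the slow throughput: if `add_requests` and `Imgdloss(...).xiazai()` fetch pages synchronously (their source is not shown here, so this is an assumption), every callback stalls Scrapy's event loop until the extra download finishes. A hedged sketch of the usual alternative is to yield a follow-up request and finish the item in a second callback. `CompanyContactMixin` and `parse_contact` are hypothetical names, the item is a plain dict trimmed to three fields, and `cb_kwargs` needs Scrapy 1.7 or later.

```python
class CompanyContactMixin:
    """Hypothetical mixin: fetch the contact page through Scrapy's scheduler."""

    def com_html(self, response):
        # Collect what the company page itself provides.
        item = {
            "url": response.url,
            "company": response.xpath(
                "//div[@class='shop_left_company_name']/text()"
            ).extract_first(""),
        }
        xurl = response.xpath(
            "//div[@id='top_menu']//a[contains(text(),'联系方式')]/@href"
        ).extract_first("")
        if xurl:
            # Let Scrapy download the contact page instead of blocking here;
            # the half-built item travels along in cb_kwargs.
            yield response.follow(
                xurl, callback=self.parse_contact, cb_kwargs={"item": item}
            )
        else:
            yield item

    def parse_contact(self, response, item):
        # Second callback: complete the item from the contact page.
        item["tele"] = response.xpath(
            "//table[@class='table']//td[contains(text(),'电 话:')]"
            "/following-sibling::td/text()"
        ).extract_first("").strip().replace("*", "")
        yield item
```

The same pattern would apply to the phone lookup in `sell_html`; image downloads could likewise be left to Scrapy rather than done inside the callback.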

Items are stored in MySQL asynchronously:

import MySQLdb
import MySQLdb.cursors
from twisted.enterprise import adbapi


class MysqlTwistedpipline(object):
    """Insert into the database asynchronously through a connection pool.

    Note: MysqlTwistedpipline must be registered in ITEM_PIPELINES in settings.
    """

    def __init__(self, dbpool):
        self.dbpool = dbpool
        self.number = 0
        self.error = 0

    @classmethod
    def from_settings(cls, settings):
        dbparms = dict(
            host=settings["MYSQL_HOST"],
            port=settings["MYSQL_PORT"],
            user=settings["MYSQL_USER"],
            password=settings["MYSQL_PASSWORD"],
            db=settings["MYSQL_DB"],
            charset="utf8",
            cursorclass=MySQLdb.cursors.DictCursor,
            use_unicode=True,
        )
        dbpool = adbapi.ConnectionPool("MySQLdb", **dbparms)
        return cls(dbpool)

    def process_item(self, item, spider):
        # Use Twisted to run the MySQL insert asynchronously
        query = self.dbpool.runInteraction(self.do_insert, item)
        self.number += 1
        print("-" * 30, "\nRunning [async insert] pipeline\nInserting record #{0}\n".format(self.number), "-" * 30)
        query.addErrback(self.handle_error, item, spider)  # handle failures
        # Return the item so any later pipelines still receive it
        return item

    def handle_error(self, failure, item, spider):
        # Errback for failed asynchronous inserts
        self.error += 1
        print(failure, item['url'])
        print("-" * 30, "\n[async insert] error\nFailed insert #{0}\n".format(self.error), "-" * 30)

    def do_insert(self, cursor, item):
        # Run the actual insert; each item class supplies its own SQL
        insert_sql, params = item.get_insert_sql()
        cursor.execute(insert_sql, params)
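The pipeline relies on `item.get_insert_sql()`, which the post does not show. As a rough sketch of what such a method might return, assuming a hypothetical table `buy_info` whose columns are named after the item fields, a plain `dict` subclass stands in for the Scrapy item here so the snippet runs on its own:

```python
class BuyinfoSketch(dict):
    """Stand-in for BuyinfoItem; a real scrapy.Item would declare Field()s."""

    # Hypothetical table and column names, not taken from the post.
    TABLE = "buy_info"
    COLUMNS = ("es_id", "title", "tags", "content", "url", "url_id",
               "img_url", "company", "fabu_date")

    def get_insert_sql(self):
        # One %s placeholder per column; the driver escapes the values.
        placeholders = ", ".join(["%s"] * len(self.COLUMNS))
        insert_sql = "INSERT INTO {0} ({1}) VALUES ({2})".format(
            self.TABLE, ", ".join(self.COLUMNS), placeholders
        )
        # Missing fields fall back to "" so an absent value does not raise KeyError.
        params = tuple(self.get(col, "") for col in self.COLUMNS)
        return insert_sql, params


item = BuyinfoSketch(es_id="abc", title="Steel pipe wanted",
                     url="http://www.eastsoo.com/buy/x.html")
sql, params = item.get_insert_sql()
print(sql)
print(params)
```

If a method like this raises, or the SQL is rejected by the server, the failure only shows up in `handle_error`, so the error count printed there is worth comparing with the number of rows that actually land in the table.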

Very little data arrives in the database.
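One way to narrow down why so few rows arrive is to check which URLs each rule's `allow` pattern would accept. As far as I know, `LinkExtractor` applies these patterns to the absolute URL with `re.search`, so the standard library can approximate it. The patterns below are copied from the spider; the sample URLs are made up for illustration and may not match the site's real URL scheme.

```python
import re

# allow patterns copied from the spider's rules, keyed by callback name
RULES = {
    "buy_html": r"buy/[\w|-]+\.html$",
    "sell_html": r"buyoffer/\w+\.html$",
    "com_html": r"www\.eastsoo\.com/u\d+($|/$)",
}


def matching_callbacks(url):
    """Return the callbacks whose allow pattern matches the given URL."""
    return [name for name, pattern in RULES.items() if re.search(pattern, url)]


# Hypothetical sample URLs
samples = [
    "http://www.eastsoo.com/buy/steel-pipe.html",
    "http://www.eastsoo.com/buyoffer/12345.html",
    "http://www.eastsoo.com/buyoffer/steel-pipe.html",
    "http://www.eastsoo.com/u1024/",
    "http://www.eastsoo.com/u1024/contact.html",
]
for url in samples:
    print(url, matching_callbacks(url))
```

A URL that matches no rule never reaches a callback. Note also that every callback yields nothing when its `tong` check fails, so a page can match a rule and still produce no item.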
