Runs fine in cmd, but fails in PyCharm

Here is the code:

from scrapy.cmdline import execute

import sys,os

sys.path.append(os.path.dirname(os.path.abspath(__file__)))

execute(['scrapy','crawl','jobbole'])

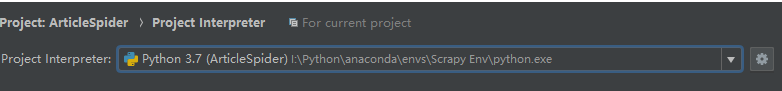

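Running it in PyCharm's built-in terminal produces the same error, but a terminal opened from Anaconda, after changing into the project folder, does not report any error. PyCharm's project interpreter is set to the same environment.

Error output after running: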
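To compare the two shells, a small diagnostic script like the one below can be run once from the PyCharm terminal and once from the Anaconda terminal. This is only a sketch: `describe_environment` is a hypothetical helper name, and the idea that the difference lies in `PATH` entries added by environment activation is an assumption, not something confirmed in this post.

```python
import os
import sys


def describe_environment(environ=None):
    """Collect the interpreter location and the PATH entries that mention conda."""
    # Allow a custom mapping to be passed in so the helper is easy to test.
    environ = os.environ if environ is None else environ
    path_entries = [p for p in environ.get("PATH", "").split(os.pathsep) if p]
    return {
        "executable": sys.executable,  # interpreter actually running this script
        "prefix": sys.prefix,          # root of the Python installation or env
        "conda_env": environ.get("CONDA_DEFAULT_ENV"),  # set by conda activation
        "conda_path_entries": [p for p in path_entries if "conda" in p.lower()],
    }


if __name__ == "__main__":
    for key, value in describe_environment().items():
        print(f"{key}: {value}")
```

If the interpreter path matches in both shells but the `PATH` entries differ, the environment is probably not being activated in the failing shell, which may explain why a compiled dependency cannot load its DLLs there.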

I:\PythonHighLevelScrapy\notes\ScrapyPoroject\ArticleSpider>scrapy crawl jobbole

2020-05-14 16:01:31 [scrapy.utils.log] INFO: Scrapy 1.6.0 started (bot: ArticleSpider)

Traceback (most recent call last):

File "I:\Python\anaconda\Scripts\scrapy-script.py", line 10, in <module>

sys.exit(execute())

File "I:\Python\anaconda\lib\site-packages\scrapy\cmdline.py", line 149, in execute

cmd.crawler_process = CrawlerProcess(settings)

File "I:\Python\anaconda\lib\site-packages\scrapy\crawler.py", line 254, in __init__

log_scrapy_info(self.settings)

File "I:\Python\anaconda\lib\site-packages\scrapy\utils\log.py", line 149, in log_scrapy_info

for name, version in scrapy_components_versions()

File "I:\Python\anaconda\lib\site-packages\scrapy\utils\versions.py", line 35, in scrapy_components_versions

("pyOpenSSL", _get_openssl_version()),

File "I:\Python\anaconda\lib\site-packages\scrapy\utils\versions.py", line 43, in _get_openssl_version

import OpenSSL

File "I:\Python\anaconda\lib\site-packages\OpenSSL\__init__.py", line 8, in <module>

from OpenSSL import crypto, SSL

File "I:\Python\anaconda\lib\site-packages\OpenSSL\crypto.py", line 16, in <module>

from OpenSSL._util import (

File "I:\Python\anaconda\lib\site-packages\OpenSSL\_util.py", line 6, in <module>

from cryptography.hazmat.bindings.openssl.binding import Binding

File "I:\Python\anaconda\lib\site-packages\cryptography\hazmat\bindings\openssl\binding.py", line 14, in <module>

from cryptography.hazmat.bindings._openssl import ffi, lib

ImportError: DLL load failed: 找不到指定的程序。 (The specified procedure could not be found.)

I:\PythonHighLevelScrapy\notes\ScrapyPoroject\ArticleSpider>
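Since the traceback ends at the import of `cryptography.hazmat.bindings._openssl`, the failure can be reproduced without Scrapy by importing that module directly in the failing shell. A minimal sketch, where `try_import` is a hypothetical helper name introduced only for illustration:

```python
import importlib


def try_import(module_name):
    """Return None if the module imports cleanly, otherwise the error message."""
    try:
        importlib.import_module(module_name)
    except ImportError as exc:
        # DLL load failures on Windows surface as ImportError as well.
        return str(exc)
    return None


if __name__ == "__main__":
    # Module names taken from the traceback above, outermost first.
    for name in ("OpenSSL", "cryptography.hazmat.bindings._openssl"):
        error = try_import(name)
        print(f"{name}: {'OK' if error is None else error}")
```

If the direct import fails in the same way, the problem lies with how the compiled `cryptography` binding finds its DLLs in that shell, rather than with the Scrapy project itself.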

Running it in the Anaconda terminal produces no error:

(Scrapy Env) I:\PythonHighLevelScrapy\notes\ScrapyPoroject\ArticleSpider>scrapy crawl jobbole

2020-05-14 15:51:14 [scrapy.utils.log] INFO: Scrapy 1.6.0 started (bot: ArticleSpider)

2020-05-14 15:51:14 [scrapy.utils.log] INFO: Versions: lxml 4.5.0.0, libxml2 2.9.9, cssselect 1.1.0, parsel 1.5.2, w3lib 1.21.0, Twisted 20.3.0, Python 3.7.7 (default, May 6 2020, 11:45:54) [MSC v.1916 64 bit (AMD64)], pyOpenSSL 19.1.0 (OpenSSL 1.1.1g 21 Apr 2020), cryptography 2.9.2, Platform Windows-10-10.0.17134-SP0

2020-05-14 15:51:14 [scrapy.crawler] INFO: Overridden settings: {'BOT_NAME': 'ArticleSpider', 'NEWSPIDER_MODULE': 'ArticleSpider.spiders', 'ROBOTSTXT_OBEY': True, 'SPIDER_MODULES': ['ArticleSpider.spiders']}

2020-05-14 15:51:14 [scrapy.extensions.telnet] INFO: Telnet Password: 93272105fac220a1

2020-05-14 15:51:14 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.corestats.CoreStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.logstats.LogStats']

2020-05-14 15:51:15 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.robotstxt.RobotsTxtMiddleware',

'scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.cookies.CookiesMiddleware',

'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware',

'scrapy.downloadermiddlewares.stats.DownloaderStats']

2020-05-14 15:51:15 [scrapy.middleware] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',

'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',

'scrapy.spidermiddlewares.referer.RefererMiddleware',

'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',

'scrapy.spidermiddlewares.depth.DepthMiddleware']

2020-05-14 15:51:15 [scrapy.middleware] INFO: Enabled item pipelines:

[]

2020-05-14 15:51:15 [scrapy.core.engine] INFO: Spider opened

2020-05-14 15:51:15 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2020-05-14 15:51:15 [scrapy.extensions.telnet] INFO: Telnet console listening on 127.0.0.1:6023

2020-05-14 15:51:15 [scrapy.downloadermiddlewares.redirect] DEBUG: Redirecting (301) to <GET https://news.cnblogs.com/robots.txt> from <GET http://news.cnblogs.com/robots.txt>

2020-05-14 15:51:15 [scrapy.core.engine] DEBUG: Crawled (404) <GET https://news.cnblogs.com/robots.txt> (referer: None)

2020-05-14 15:51:15 [scrapy.downloadermiddlewares.redirect] DEBUG: Redirecting (301) to <GET https://news.cnblogs.com/> from <GET http://news.cnblogs.com/>

2020-05-14 15:51:15 [scrapy.core.engine] DEBUG: Crawled (200) <GET https://news.cnblogs.com/> (referer: None)

2020-05-14 15:51:15 [scrapy.core.engine] INFO: Closing spider (finished)

2020-05-14 15:51:15 [scrapy.statscollectors] INFO: Dumping Scrapy stats:

{'downloader/request_bytes': 880,

'downloader/request_count': 4,

'downloader/request_method_count/GET': 4,

'downloader/response_bytes': 17545,

'downloader/response_count': 4,

'downloader/response_status_count/200': 1,

'downloader/response_status_count/301': 2,

'downloader/response_status_count/404': 1,

'finish_reason': 'finished',

'finish_time': datetime.datetime(2020, 5, 14, 7, 51, 15, 935346),

'log_count/DEBUG': 4,

'log_count/INFO': 9,

'response_received_count': 2,

'robotstxt/request_count': 1,

'robotstxt/response_count': 1,

'robotstxt/response_status_count/404': 1,

'scheduler/dequeued': 2,

'scheduler/dequeued/memory': 2,

'scheduler/enqueued': 2,

'scheduler/enqueued/memory': 2,

'start_time': datetime.datetime(2020, 5, 14, 7, 51, 15, 340346)}

2020-05-14 15:51:15 [scrapy.core.engine] INFO: Spider closed (finished)

(Scrapy Env) I:\PythonHighLevelScrapy\notes\ScrapyPoroject\ArticleSpider>
