java api测试失败

20180331修改:

老师好:这是我的代码:

package com.imooc.hadoop.hdfs;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.junit.After;

import org.junit.Before;

import org.junit.Test;

import java.net.URI;

/**

* Hadoop HDFS Java API 操作

*/

public class HDFSApp {

public static final String HDFS_PATH = "hdfs://192.168.140.134:8020";

FileSystem fileSystem = null;

Configuration configuration = null;

/**

* 创建HDFS目录

*/

@Test

public void mkdir() throws Exception {

fileSystem.mkdirs(new Path("/hdfsapi/test"));

}

@Before

public void setUp() throws Exception {

configuration = new Configuration();

fileSystem = FileSystem.get(new URI(HDFS_PATH),configuration, "omgitscyx");

System.out.println("HDFSApp.setUp");

}

@After

public void tearDown() throws Exception{

configuration = null;

fileSystem = null;

System.out.println("HDFSApp.tearDown");

}

}

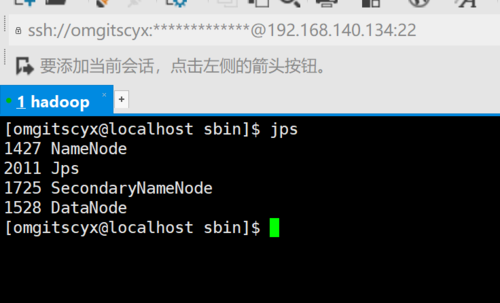

确认测试时候hdfs是活动的

-----------------------------------------下为原问题----------------------------------------------------------

老师好,我按照视频做java api 测试时遇到如下报错:

java.net.ConnectException: Call From DESKTOP-242D5J8/10.4.36.21 to 192.168.140.134:8020 failed on connection exception: java.net.ConnectException: Connection refused: no further information; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:791)

at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:731)

at org.apache.hadoop.ipc.Client.call(Client.java:1475)

at org.apache.hadoop.ipc.Client.call(Client.java:1408)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:230)

at com.sun.proxy.$Proxy12.mkdirs(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.mkdirs(ClientNamenodeProtocolTranslatorPB.java:544)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:256)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:104)

at com.sun.proxy.$Proxy13.mkdirs(Unknown Source)

at org.apache.hadoop.hdfs.DFSClient.primitiveMkdir(DFSClient.java:3082)

at org.apache.hadoop.hdfs.DFSClient.mkdirs(DFSClient.java:3049)

at org.apache.hadoop.hdfs.DistributedFileSystem$18.doCall(DistributedFileSystem.java:957)

at org.apache.hadoop.hdfs.DistributedFileSystem$18.doCall(DistributedFileSystem.java:953)

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)

at org.apache.hadoop.hdfs.DistributedFileSystem.mkdirsInternal(DistributedFileSystem.java:953)

at org.apache.hadoop.hdfs.DistributedFileSystem.mkdirs(DistributedFileSystem.java:946)

at org.apache.hadoop.fs.FileSystem.mkdirs(FileSystem.java:1861)

at com.imooc.hadoop.hdfs.HDFSApp.mkdir(HDFSApp.java:29)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:45)

at org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:15)

at org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:42)

at org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:20)

at org.junit.internal.runners.statements.RunBefores.evaluate(RunBefores.java:28)

at org.junit.internal.runners.statements.RunAfters.evaluate(RunAfters.java:30)

at org.junit.runners.ParentRunner.runLeaf(ParentRunner.java:263)

at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:68)

at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:47)

at org.junit.runners.ParentRunner$3.run(ParentRunner.java:231)

at org.junit.runners.ParentRunner$1.schedule(ParentRunner.java:60)

at org.junit.runners.ParentRunner.runChildren(ParentRunner.java:229)

at org.junit.runners.ParentRunner.access$000(ParentRunner.java:50)

at org.junit.runners.ParentRunner$2.evaluate(ParentRunner.java:222)

at org.junit.runners.ParentRunner.run(ParentRunner.java:300)

at org.junit.runner.JUnitCore.run(JUnitCore.java:157)

at com.intellij.junit4.JUnit4IdeaTestRunner.startRunnerWithArgs(JUnit4IdeaTestRunner.java:68)

at com.intellij.rt.execution.junit.IdeaTestRunner$Repeater.startRunnerWithArgs(IdeaTestRunner.java:47)

at com.intellij.rt.execution.junit.JUnitStarter.prepareStreamsAndStart(JUnitStarter.java:242)

at com.intellij.rt.execution.junit.JUnitStarter.main(JUnitStarter.java:70)

Caused by: java.net.ConnectException: Connection refused: no further information

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:530)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:494)

at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:614)

at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:713)

at org.apache.hadoop.ipc.Client$Connection.access$2900(Client.java:375)

at org.apache.hadoop.ipc.Client.getConnection(Client.java:1524)

at org.apache.hadoop.ipc.Client.call(Client.java:1447)

... 44 more

经过检查我的虚拟机里hdfs是打开了,ip地址端口什么也没抄错,请问下是什么原因

正在回答

1回答

相似问题

登录后可查看更多问答,登录/注册