could only be replicated to 0 nodes instead of minReplication (=1).

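Hello, I'm using an Alibaba Cloud instance with 2 cores and 2 GB of RAM, running CentOS Linux release 7.5.1804 (Core).

So far only the Hadoop environment is installed. The firewall is disabled, and the security group rules open all ports.

When I call fileSystem.create(), the directory and the file are created, but the file content cannot be written.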

"D:\Program Files\Java\jdk1.8.0_181\bin\java.exe" -ea -Didea.test.cyclic.buffer.size=1048576 "-javaagent:D:\Program Files\JetBrains\IntelliJ IDEA 2019.1.1\lib\idea_rt.jar=51635:D:\Program Files\JetBrains\IntelliJ IDEA 2019.1.1\bin" -Dfile.encoding=UTF-8 -classpath "...;D:\local\repository\org\apache\hadoop\hadoop-client\2.6.0-cdh5.15.1\hadoop-client-2.6.0-cdh5.15.1.jar;D:\local\repository\org\apache\hadoop\hadoop-common\2.6.0-cdh5.15.1\hadoop-common-2.6.0-cdh5.15.1.jar;...;D:\local\repository\org\apache\hadoop\hadoop-hdfs\2.6.0-cdh5.15.1\hadoop-hdfs-2.6.0-cdh5.15.1.jar;..." com.intellij.rt.execution.junit.JUnitStarter -ideVersion5 -junit4 com.zmj.bigdata.hadoop.hdfs.HDFSApp,create

—setUp()—

2019-10-17 14:39:35,502 WARN [org.apache.hadoop.hdfs.DFSClient] - Abandoning BP-1287366486-172.17.27.157-1571293485330:blk_1073741827_1003

2019-10-17 14:39:35,612 WARN [org.apache.hadoop.hdfs.DFSClient] - Excluding datanode DatanodeInfoWithStorage[172.17.27.157:50010,DS-09dcda8b-eb85-4936-985d-0d40cf61b9a8,DISK]

2019-10-17 14:39:35,777 WARN [org.apache.hadoop.hdfs.DFSClient] - DataStreamer Exception

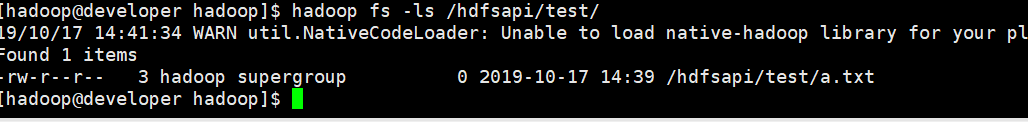

org.apache.hadoop.ipc.RemoteException(java.io.IOException): File /hdfsapi/test/a.txt could only be replicated to 0 nodes instead of minReplication (=1). There are 1 datanode(s) running and 1 node(s) are excluded in this operation.

at org.apache.hadoop.hdfs.server.blockmanagement.BlockManager.chooseTarget4NewBlock(BlockManager.java:1719)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getAdditionalBlock(FSNamesystem.java:3508)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.addBlock(NameNodeRpcServer.java:694)

at org.apache.hadoop.hdfs.server.namenode.AuthorizationProviderProxyClientProtocol.addBlock(AuthorizationProviderProxyClientProtocol.java:219)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.addBlock(ClientNamenodeProtocolServerSideTranslatorPB.java:507)

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:617)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:1073)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2281)

at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2277)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1924)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2275)

at org.apache.hadoop.ipc.Client.call(Client.java:1504)

at org.apache.hadoop.ipc.Client.call(Client.java:1441)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:230)

at com.sun.proxy.$Proxy13.addBlock(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.addBlock(ClientNamenodeProtocolTranslatorPB.java:425)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:258)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:104)

at com.sun.proxy.$Proxy14.addBlock(Unknown Source)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.locateFollowingBlock(DFSOutputStream.java:1860)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1656)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:790)

—tearDown()—
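
The "Excluding datanode" warning in the log names the address the client tried to write to, 172.17.27.157:50010, which is in a private IP range. One thing that may be worth checking (an assumption on my part, the log alone does not confirm it) is whether the machine running the test can open a TCP connection to that address at all. Below is a minimal sketch using only the JDK; the class name `DataNodeReachability` and the 3-second timeout are made up for illustration.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical helper, not part of Hadoop: checks plain TCP reachability only.
class DataNodeReachability {

    /** Returns true if a TCP connection to host:port succeeds within timeoutMillis. */
    public static boolean canConnect(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Covers refused connections, timeouts, and unresolvable hosts.
            return false;
        }
    }

    public static void main(String[] args) {
        // Defaults are the datanode address and port taken from the warning in the log.
        String host = args.length > 0 ? args[0] : "172.17.27.157";
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 50010;
        boolean ok = canConnect(host, port, 3000);
        System.out.println(host + ":" + port + (ok ? " is reachable" : " is NOT reachable"));
    }
}
```

This would be run on the same Windows machine that runs the JUnit test. If the address is not reachable from there, that could explain why the client excluded the only datanode, although other causes are possible too.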